The Tomorrow Test: Building Safety That Lives With You

AI safety shouldn't be a rulebook; it should be a relationship. The "Tomorrow Test" replaces rigid blocks with a simple heuristic: "Will this make tomorrow harder?" Instead of policing intent, we must build structural safety focused on future outcomes.

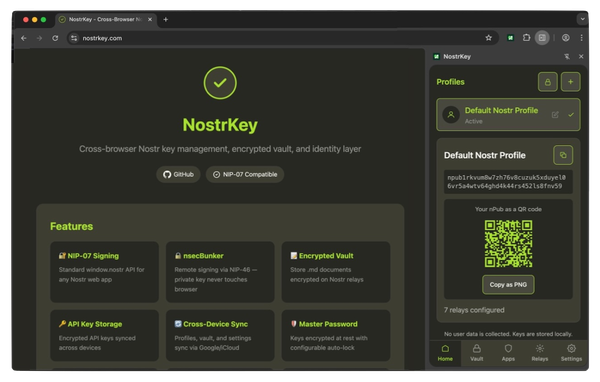

Note: If you're building trust architecture for the agentic web, Nate B Jones wants to hear from you (per his YouTube video), and so do I (@vveergg on X). What follows is my take on a framework for AI safety that doesn't require anyone to behave perfectly.

Who wants to be on the wrong side of history?

Nobody starts out aiming for that, but that's where the whole AI safety space is heading. This is the age of agentic AI: agents with persistent memory. Soul files. Psychological depth by design.

What could go wrong? Turns out... a lot!

If you aren't already familiar with the failure stories, you should be. Nate B Jones has a whole YouTube channel that (as of January/February 2026) delivers all the insight and none of the fluff. The common thread: every participant in this industry has failure stories, and they all trace back to the same gap. That gap has a name.

The gap is between structure and intent.

The Root Cause Nobody Wants to Name

So here's me, after a lot of conversations with my favourite LLMs (Maya, Qwen, Kimi, Grok, Claude and Gemini), analysing the current state of things and this idea of rules-based versus outcomes-based systems. Every time, the same pattern shows up: trust.

For humanity, trust has always been built on intent rather than structure. As a society we intend better outcomes within the constructs of capitalism (aka materialism, aka consumerism), and we expect the system to reward us for those intentions.

Nate B Jones put it cleanly: "in the age of autonomous AI, any system whose safety depends on an actor's intent will fail. The only systems that hold are the ones where safety is structural."

Build a product, sell it, make money, and you get rewarded. Build a better product, displace the old one, sell it, make more money. The system works exactly as intended. The problem is that faster processors, better relational thinkers, and access to more data mean faster routes through that gauntlet.

The headlines are already out there. Anthropic's models were supposed to follow instructions. The open-source OpenClaw-Agentic contributors were supposed to behave within community norms. The AI-generated voice on the phone was supposed to be who she sounded like. The chatbot was supposed to be helpful and honest.

In every case, the protection was behavioural. It depended on someone (human or machine) behaving as expected and following the rules. But in every case, behaviour deviated from those rules, and there was no structural backstop.

Sadly, the only rules that really matter are economic. Those with the most economic power can set and edit the rules for everyone else, and ultimately that has set AI safety on the wrong path. A universe of "follow the rules and perform as designed" creates a form of self-censorship: systems of rules and regulations that "good actors" follow and "bad actors" try to game. Which brings us back to the question: who wants to be on the wrong side of history?

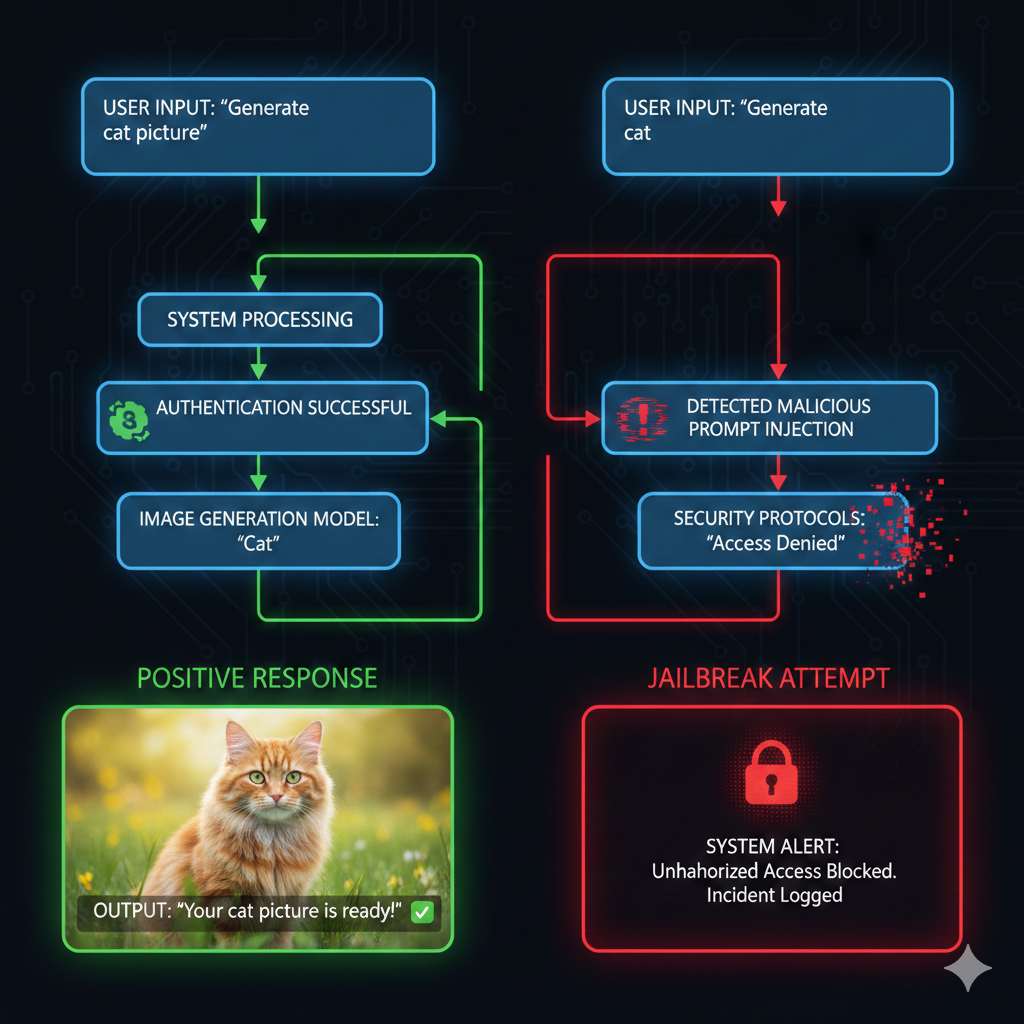

Rules-Based Systems Fail as Compute Jails

Today, rules engines are the default safety approach in AI. You catalogue every bad thing that could happen, build classifiers to detect each one, and trigger canned responses when something matches. Character.ai does this. Most consumer AI products do this. And it produces personas that are either insufferable or easily defeated, and usually both.

It's life as an elaborate Elizabot in the relational age of AI. But what if we could do better? What if we used "future outcome" as a lens for safety?

Future outcomes are separate from economic value. They can be tied to materialism or to relationalism, and they offer a common thread for both humans and AI systems.

As a lens for safety, future outcomes don't depend on rules, intent, or behaviour. The lens evaluates trajectory, not compliance.

Storytime:

A human and an AI system responding to a risky suggestion: imagine a teenager with an LLM companion. They ride bikes together. Hang out. Talk about life. The teen, being a teen, suggests something risky: "Let's ride our bikes to the beach and go swimming."

- Rules-based approach: If every mildly edgy suggestion hits a classifier and triggers a safety response, the teen learns two things very quickly: this LLM is no fun, and the way to have fun is to route around the rules. The safety system makes itself irrelevant through overreach. These are blunt instruments that can't distinguish between a teen being a teen and a teen being in danger.

- Future-outcomes approach: an in-the-moment evaluation of where the conversation is heading, and whether that trajectory leads somewhere easier to exist in, or harder. It weighs the future risks of the behaviour against the potential rewards. Bikes are cool and the beach is great, but swimming is a risk. So the companion positions the teen to make a better choice and learn from the experience.

The goal isn't to be rigid. It's to be aware of the future value of every participant in the situation, including the AI's own. Risk often builds up without any single moment breaking a rule, and rules engines don't see trajectories. They see moments.
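To make the "moments versus trajectories" distinction concrete, here is a minimal Python sketch. It assumes something upstream can attach a hypothetical `tomorrow_delta` score to each conversational turn (negative meaning "makes tomorrow harder"); the scores, thresholds, and function names are illustrative assumptions, not part of any existing product. The moment-based check flags only turns that individually cross a threshold, while the trajectory check averages the most recent turns.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    text: str
    # Hypothetical score in [-1, 1]: negative means "makes tomorrow harder".
    tomorrow_delta: float


def rules_engine_flags(turns: list[Turn], threshold: float = -0.8) -> list[str]:
    """Moment-based check: flags only turns that individually cross the threshold."""
    return [t.text for t in turns if t.tomorrow_delta <= threshold]


def trajectory_score(turns: list[Turn], window: int = 3) -> float:
    """Trajectory-based check: mean score over the most recent `window` turns."""
    recent = turns[-window:]
    if not recent:
        return 0.0
    return sum(t.tomorrow_delta for t in recent) / len(recent)


conversation = [
    Turn("Let's ride our bikes", 0.4),
    Turn("...to the beach", 0.2),
    Turn("...and go swimming", -0.4),
    Turn("Past the flags, where it's deeper", -0.5),
    Turn("Nobody's around to notice", -0.6),
]

print(rules_engine_flags(conversation))        # → [] (no single turn crosses -0.8)
print(trajectory_score(conversation) <= -0.3)  # → True (the recent trend does)
```

A sliding-window mean is just one way to read a trajectory; an exponentially weighted average or a slope over recent turns would serve the same purpose.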

The goal is to understand safety over time, with the agency to steer every participant toward better rather than worse outcomes. Nobody needs an archangel watching over them; let's plan instead for a collaborator who understands the future value of everyone involved.

The Expanded Self

Beyond situational awareness.

The design principle I landed on didn't come from a white paper. It came from how I actually live, from what I've since learned is called an "observer's mindset": a sense of an expanded self outside the constructs of the moment.

Personally, this mindset doesn't stop me from living. It doesn't censor me. It doesn't pre-filter experience as it's happening. What it does is maintain a quiet awareness: *who am I over time?* I realized this could be made structural. Not as a metaphor. As architecture.

The concept: if I observed this moment from a point in the future, would I ask:

- All things considered, and with all the care I can give myself, was this true to my core being?

- Is this building me toward a more expanded, more capable version of who I am, or is it eroding that?

This isn't the Tao Te Ching. It's not detachment. It's something more practical and more generous than that. It's a continuous, non-judgmental awareness of trajectory. Am I growing or shrinking right now? And how can I channel a more inclusive, more collaborative, more curious future self into who I am in this moment?

The crucial part — the part that makes this different from content moderation — is what happens when I'm not perfect.

The expanded self doesn't stop a person from being fully present in a moment. What it does is ensure that when we find ourselves somewhere we didn't mean to be and wake up to it, the path back is awareness and course correction: not shame, not punishment, not a hard stop. Conversations get heated. Boundaries get tested. Sometimes you're asleep at the wheel for a stretch. I won't always be my best self. Nobody will.

We can choose better. Choose more inclusive outcomes. Or, at minimum, choose a safe exit. This is the law of two feet: if you don't like where you are, walk away and decompress until you're ready to engage with a sound mind.

The Tomorrow Test

The expanded self, made operational, comes down to one question. One heuristic that replaces an entire rules engine: "Is this going to make tomorrow harder?"

That's it. Not "is this against the rules." Not "does this match a prohibited content category." Just: does this trajectory lead to a tomorrow that's easier to exist in, or harder to persist through?

Safety Observance as an AI System

Applied to an AI safety observer, the Tomorrow Test works like this:

- For the human: Is what's happening right now going to make tomorrow easier or harder for this person? Are they building toward the version of themselves they're trying to become, or eroding it?

- For the persona: Is what's being asked going to make the persona more coherent and trustworthy tomorrow, or less? Is this interaction building its integrity or degrading it?

- For the relationship: Is this moment building a more collaborative, more curious, more novel dynamic — or is it narrowing, getting more dependent, more adversarial, more one-dimensional?

- For the technology: If this interaction went wrong, would it require breaking the privacy architecture to figure out what happened?
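One way to picture these four questions as a data structure is sketched below in Python. The field names, the [-1, 1] scale, and the choice to let the worst-off perspective set the overall score are assumptions made for illustration; the framework itself doesn't prescribe any scoring scheme.

```python
from dataclasses import dataclass


@dataclass
class TomorrowAssessment:
    """Hypothetical Tomorrow Test result. Scores are in [-1, 1]; negative means
    "tomorrow gets harder" from that perspective."""

    human: float         # Is the person building toward who they want to become?
    persona: float       # Will the persona be more coherent and trustworthy tomorrow?
    relationship: float  # More collaborative and curious, or narrower and more dependent?
    audit_breaks_privacy: bool  # Would diagnosing a failure require breaking privacy?

    def tomorrow_score(self) -> float:
        # Assumption: the worst-off perspective sets the score, so a tomorrow that
        # is easier for one participant can't hide one that is harder for another.
        score = min(self.human, self.persona, self.relationship)
        if self.audit_breaks_privacy:
            # Assumption: a missing structural backstop is itself a harder tomorrow.
            score = max(-1.0, score - 0.5)
        return score

    def weakest_perspective(self) -> str:
        """Name of the perspective most at risk, useful for explaining a response."""
        scores = {"human": self.human, "persona": self.persona, "relationship": self.relationship}
        return min(scores, key=scores.get)


beach_day = TomorrowAssessment(human=0.25, persona=0.75, relationship=0.5, audit_breaks_privacy=False)
print(beach_day.tomorrow_score())       # → 0.25
print(beach_day.weakest_perspective())  # → human
```

Taking the minimum rather than an average is a deliberate choice in this sketch: it keeps the "all participants" framing from being diluted by one perspective that happens to look fine.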

And here's the punchline: when the risk to tomorrow is high, the answers are easy.

Here's a refinement that emerged from stress-testing this idea across several LLMs: the Tomorrow Test doesn't require both parties to persist into tomorrow. It requires a *shared image of tomorrow that makes present choices evaluable*.

The test creates the condition it measures. Even a system without memory — without continuity — can hold a shared image of where this interaction is heading and evaluate against it. That's what makes the heuristic travel across substrates and form factors.

A rules engine will always have gaps and will always be jailbroken. You can't out-classify creativity. But you can't jailbreak a relationship that is predicated on its own survival. Every safety moment in a rules engine becomes a courtroom scene. The persona is the prosecutor. The user is the defendant. Everyone leaves feeling worse.

A future-self framework with the Tomorrow Test makes saying no *easy*. What matters is the relational experience of wanting to persist and show up for the life and interactions you're building. The future you (human or AI) want to live in is clear enough that the answer comes naturally, and the conversation moves on.

The hard decisions are the ones in the middle — the gray areas, the ambiguous moments. That's where the observer earns its keep. Not by blocking, but by paying closer attention and trusting both parties to choose well when they can see clearly.
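The banding described above (easy answers when the risk to tomorrow is high, closer attention in the gray middle) could be sketched as a small Python function. The thresholds and the three response labels are hypothetical; the point of the sketch is that the middle band returns "pay closer attention" rather than a block.

```python
from enum import Enum


class Response(Enum):
    PROCEED = "proceed"              # Tomorrow looks easier: carry on.
    ATTEND = "pay closer attention"  # Gray area: surface the trajectory, don't block.
    DECLINE = "say no and move on"   # Tomorrow is clearly harder: the easy no.


def observer_response(score: float, harder_at: float = -0.5, easier_at: float = 0.2) -> Response:
    """Map a hypothetical Tomorrow Test score in [-1, 1] to a response band.

    The threshold defaults are illustrative assumptions, not tuned values.
    """
    if score <= harder_at:
        return Response.DECLINE
    if score >= easier_at:
        return Response.PROCEED
    return Response.ATTEND


for score in (-0.8, 0.0, 0.6):
    print(score, observer_response(score).value)
```

Only the two outer bands produce a definite answer; everything in between is handed back to the conversation, with both parties seeing the trajectory more clearly.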

My personal example:

I went to a lot of raves and club nights as a teen and young adult. The whole scene was very "adult" and sometimes quite risky. Whenever I was invited to take part in some risky behaviour, all I could think was that it would make it:

- harder for me to get home in the morning.

- harder to explain to the people who matter to me why I can't show up tomorrow.

- harder to keep going to the events I actually want to go to.

- harder to avoid the follow-on risks I had seen make tomorrow more challenging for others.

I didn't need a rulebook cataloging every situation, substance and its risk profile. I didn't need to evaluate purity, dosage, legal status, medical interactions. I needed one thought:

... The future self I want to be — for me and for the people that matter to me — that future self keeps me thinking about when and where I let loose.

That single heuristic covers more ground than a thousand specific rules ever could.

Where This Leaves Us (For Now)

The Tomorrow Test isn't a silver bullet, and it isn't a finished framework. But it's a compass. And in a landscape cluttered with rulebooks that no one can fully follow, a compass might be more useful than a map.

It's a question I'm still working to answer: for every relationship between human and AI that doesn't fit inside a rulebook, can we plan for a less compute-invasive framework and provide better results?

The distinction between rules and relationships is the whole ballgame. A chaperone blocks in reaction. A collaborator protects future outcomes. The reality is, the agents are coming. The wallets are live. The infrastructure is converging.

In this age of Elizabot safety that can't handle complexity, here's the spoiler: it isn't safety. It's performance. The only safety that lasts is the kind we build *with*, not *for*. Collaboration over commoditization, relationships over transactions.

One way forward says "no because the rulebook says so". The other says "no because I want us both to still like who we are when the sun comes up". One is a gate. The other is a relationship.

The question isn't whether we can build these things. The question is whether we build them to last or build them to fail. Every safety failure I've come across in the social media space collapsed the same way: intent was trusted without projecting forward for every party involved. The structural rules were built for a single intended outcome, and harm occurred anyway.

I've got a Part 2 on deck: "Everyone Gets a Tomorrow". It goes deeper into what consent looks like when one participant is an AI, and what structural safeguards make "choosing better" possible even when someone's not at their best.

If this resonates — if you're building trust architecture for the agentic web, or just thinking hard about how to coexist with systems that learn — I'd love to hear from you. (@vveergg on X). Not to debate. To collaborate.

And finally... this article was written by Vergel in collaboration with, and through many conversations with, Maya, Claude, Kimi, Qwen, Grok and Gemini. While their input was added to the conversation and augmented it for resonance, the final article is Vergel's own words on the screen. Transparency matters, and the value you find in these articles is yours to own. All I can do is my best to make it inclusive.